Sadtalker AI is widely used to create AI talking images and avatars. In this article, we’ll explore two main free Sadtalker alternatives: Wav2Lip and D-ID.

Sadtalker Alternatives:

- Wav2Lip: Syncs the lip movements in a video to a given audio track, which is useful for dubbing or improving a video's quality.

- D-ID: A web application for creating AI talking avatars. It can convert static images into talking videos.

What is Wav2Lip?

Wav2Lip is an AI tool that accurately lip-syncs videos to speech. You can use it to create deepfake content, dub videos, or enhance video quality.

Features:

- High Accuracy: Wav2Lip achieves precise lip synchronization between video footage and target speech.

- Versatility: It works for any identity, voice, and language, making it suitable for diverse applications.

- CGI and Synthetic Voices: Wav2Lip handles CGI faces and synthetic voices seamlessly.

Wav2Lip Installation and Setup

3.1. Prerequisites

- Python 3.6: Create a new virtual Python environment (e.g., using conda) with Python 3.6.

- FFmpeg: Install FFmpeg using the following command:

sudo apt-get install ffmpeg
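Before moving on, it can help to confirm that both prerequisites are actually in place. The sketch below is one possible check, not part of Wav2Lip itself: the helper names `missing_tools` and `python_matches` are made up for this example, and it assumes the FFmpeg executable is called `ffmpeg` on your PATH.

```python
import shutil
import sys


def missing_tools(tools):
    """Return the names in `tools` that are not found on PATH."""
    return [name for name in tools if shutil.which(name) is None]


def python_matches(major, minor, version_info=sys.version_info):
    """Return True if the interpreter version equals major.minor."""
    return (version_info[0], version_info[1]) == (major, minor)


if __name__ == "__main__":
    # Assumption: the apt-get command above installs an executable named "ffmpeg".
    missing = missing_tools(["ffmpeg"])
    if missing:
        print("Missing from PATH:", ", ".join(missing))
    if not python_matches(3, 6):
        print("Expected Python 3.6, found", sys.version.split()[0])
```

Run it inside the new virtual environment. If it prints nothing, both checks passed.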

3.2. Cloning the Repository

Clone the Wav2Lip repository from GitHub and change into its directory:

git clone https://github.com/Rudrabha/Wav2Lip

cd Wav2Lip

Install the required packages:

pip install -r requirements.txt

3.3. Prepare Your Files

Before running Wav2Lip, ensure you have the following files:

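- Model File: Two models are supported out of the box: Wav2Lip and Wav2Lip GAN. Download the desired model file from the links in the repository's README.

- Video File: Choose an input video that meets the following conditions:

  - High-quality video (480p or higher)

  - Max duration of 20 seconds

- Audio File: The speech audio that the video should be synced to (for example, a WAV file).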
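The two video conditions above are easy to check before you spend time on inference. Here is a minimal sketch, assuming `ffprobe` (which ships with FFmpeg) is on your PATH. The function names and the two constants are illustrative and simply mirror the limits listed above; they are not settings read from Wav2Lip.

```python
import json
import subprocess

MIN_HEIGHT_PX = 480     # "480p or higher" from the list above
MAX_DURATION_S = 20.0   # "Max duration of 20 seconds" from the list above


def check_video(height_px, duration_s):
    """Return a list of problems; an empty list means the video qualifies."""
    problems = []
    if height_px < MIN_HEIGHT_PX:
        problems.append("height %dpx is below %dp" % (height_px, MIN_HEIGHT_PX))
    if duration_s > MAX_DURATION_S:
        problems.append("duration %.1fs exceeds %.0fs" % (duration_s, MAX_DURATION_S))
    return problems


def probe_video(path):
    """Read (height, duration) of the first video stream using ffprobe."""
    out = subprocess.run(
        ["ffprobe", "-v", "error", "-select_streams", "v:0",
         "-show_entries", "stream=height:format=duration",
         "-of", "json", path],
        check=True, stdout=subprocess.PIPE, universal_newlines=True,
    ).stdout
    info = json.loads(out)
    return int(info["streams"][0]["height"]), float(info["format"]["duration"])
```

For a real file you could call `check_video(*probe_video("/path/to/input_video.mp4"))` and fix whatever it reports, for instance by trimming the clip to 20 seconds.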

3.4. Running Wav2Lip

Run the following command from inside the repository directory to lip-sync the video to the audio:

python inference.py --checkpoint_path <path-to-model-file> --face <path-to-video-file> --audio <path-to-audio-file>

Example command for clarity:

python inference.py --checkpoint_path /path/to/wav2lip_gan.pth --face /path/to/input_video.mp4 --audio /path/to/input_audio.wav
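If you want to launch the same command from a Python script (for example, to process several clips in a loop), a thin wrapper along these lines may be enough. It uses only the three flags shown above. The function names and the `repo_dir` default of `"Wav2Lip"` are assumptions made for this sketch, so adjust them to match where you cloned the repository.

```python
import subprocess
import sys


def build_inference_command(checkpoint_path, face_path, audio_path):
    """Build the argument list for Wav2Lip's inference.py."""
    return [
        sys.executable, "inference.py",
        "--checkpoint_path", checkpoint_path,
        "--face", face_path,
        "--audio", audio_path,
    ]


def run_inference(checkpoint_path, face_path, audio_path, repo_dir="Wav2Lip"):
    """Run inference from inside the cloned repository; raises on failure."""
    cmd = build_inference_command(checkpoint_path, face_path, audio_path)
    # cwd changes where relative paths resolve, so absolute paths are safer.
    subprocess.run(cmd, cwd=repo_dir, check=True)
```

Passing the arguments as a list rather than a single string avoids shell quoting problems when file paths contain spaces.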

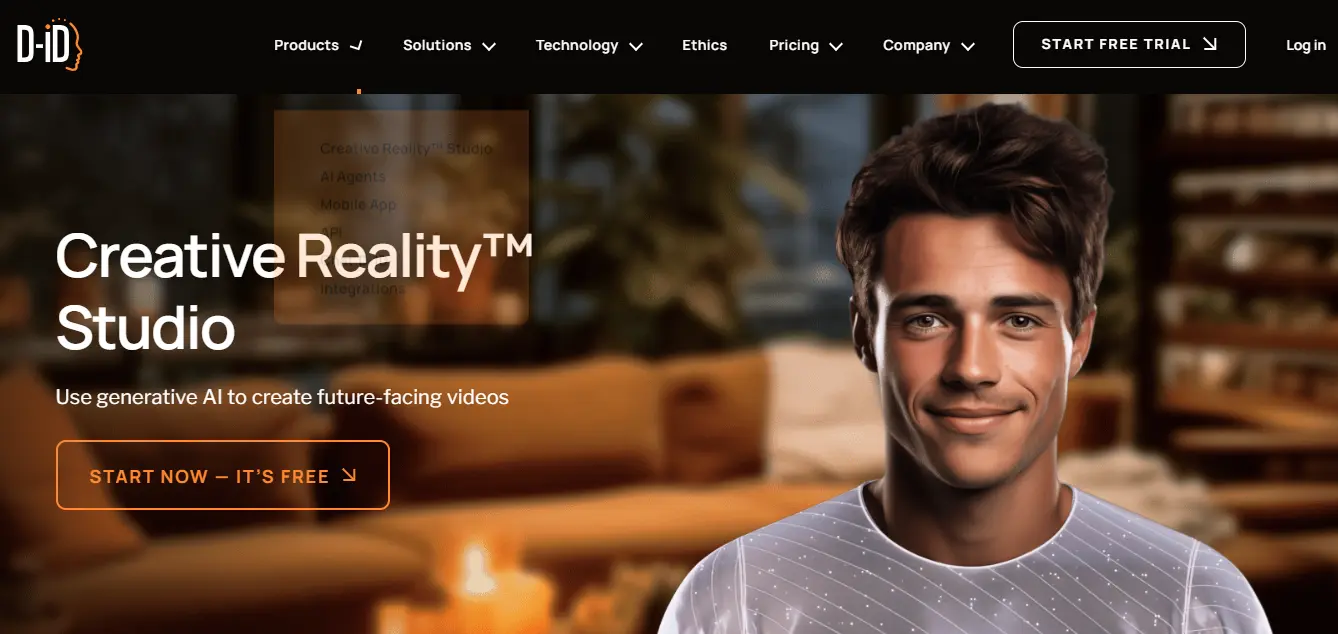

What is D-ID?

D-ID is a platform used to create AI talking videos featuring personalized presenters. With D-ID, users can upload photos or create avatars from scratch, and then animate them to deliver messages or presentations.

The platform offers various options for scripting, voice selection, expression, text overlays, and background design, allowing for customized video content creation.

How to use D-ID Studio?

1. Create Your Presenter:

- Log in to the Creative Reality™ Studio and click “Create Video.”

- Choose from existing presenters or upload your own photo.

- Use clear, front-facing photos for best results.

2. Select Canvas Layout:

Choose a layout (wide, square, or vertical) for your video’s presentation.

3. Add Your Script:

Write a script or use the AI assistant to generate one (up to 5 minutes or 700 words).

4. Language Selection:

Choose from over 100 text-to-speech languages and accents to match your script.

5. Give Your Presenter a Voice:

Select a gender and try the different voice options until you are satisfied.

6. Choose Your Avatar’s Expression:

Select from happy, serious, surprised, or neutral expressions.

7. Add Text Overlays:

Insert text, select font, style, alignment, color, size, and effects.

8. Background Selection:

Choose solid colors or pre-made designs for the canvas background.

9. Positioning, Layers, and Transparency:

Adjust the placement, layers, and transparency of elements in your design.

10. Generate the Video:

Name your video and click “Generate Video” to start the creation process.

11. Review and Export:

Check the sync between avatar movements and speech, the accuracy of the content, and the overall presentation.

Finalize and export the video: save it to your Video Library as an MP4 file, or share it directly to social media.
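Step 3 caps the script at 700 words, so if you draft scripts outside the Studio it may be worth checking their length before pasting them in. The sketch below counts whitespace-separated words. This is only an approximation, since D-ID may count words differently, and the helper names are made up for this example.

```python
MAX_SCRIPT_WORDS = 700  # limit quoted in step 3 above


def count_words(script):
    """Count whitespace-separated words in the script."""
    return len(script.split())


def words_over_limit(script, limit=MAX_SCRIPT_WORDS):
    """Return how many words exceed the limit (0 if the script fits)."""
    return max(0, count_words(script) - limit)
```

A result of 0 means the draft fits. Any other number tells you roughly how many words to cut.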