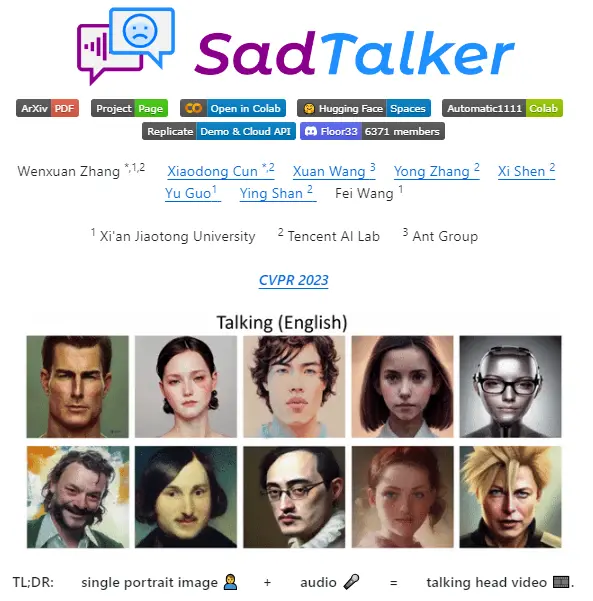

SadTalker is an innovative project presented at CVPR 2023, focusing on generating realistic 3D motion coefficients for audio-driven single-image talking face animations.

We have used GFPGAN with SadTalker, and this article explores the details of SadTalker and its integration with GFPGAN.

Using deep learning techniques, SadTalker creates expressive talking avatars from a single static image, driven by audio cues.

A crucial component of this process is GFPGAN (Generative Facial Prior-Generative Adversarial Network), which enhances the quality and realism of the generated faces.

What is SadTalker?

SadTalker is an AI research project aimed at producing lifelike talking faces from a single input image, driven by audio input. It uses advanced deep learning models to generate expressive and natural-looking facial animations that sync accurately with the provided audio.

The project addresses the challenge of creating realistic talking avatars, which has significant applications in entertainment, virtual reality, social media, and more.

What is GFPGAN?

GFPGAN plays a pivotal role in SadTalker by improving the quality of the generated facial animations.

It is a neural network designed to restore and enhance facial images, ensuring that the animated avatars look realistic and detailed.

By using a pre-trained face GAN as a generative prior, GFPGAN can accurately reconstruct fine facial features and textures, making the talking avatars more convincing and expressive.
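To make the enhancement step concrete, here is a minimal Python sketch of frame-by-frame face restoration. It assumes the separate `gfpgan` Python package and its `GFPGANer` class. The weights path and the helper names `load_restorer` and `enhance_frames` are hypothetical, and this is an illustration rather than SadTalker's own code.

```python
from typing import Any, Iterable, List


def load_restorer(model_path: str) -> Any:
    """Create a GFPGAN restorer from a local weights file (path is an assumption)."""
    # Imported lazily so the rest of this sketch works without gfpgan installed.
    from gfpgan import GFPGANer

    return GFPGANer(
        model_path=model_path,   # e.g. a downloaded GFPGAN .pth file
        upscale=1,               # keep the original frame size
        arch="clean",
        channel_multiplier=2,
        bg_upsampler=None,       # restore faces only, leave the background as is
    )


def enhance_frames(frames: Iterable[Any], restorer: Any) -> List[Any]:
    """Run face restoration on each video frame (BGR image arrays)."""
    enhanced = []
    for frame in frames:
        # enhance() returns (cropped_faces, restored_faces, restored_image)
        _, _, restored = restorer.enhance(
            frame,
            has_aligned=False,
            only_center_face=False,
            paste_back=True,  # paste restored faces back into the full frame
        )
        enhanced.append(restored)
    return enhanced
```

Passing the restorer in as an argument keeps the loop independent of how the model is loaded, so the same function works with any object that exposes a compatible `enhance` method.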

How to Use SadTalker with GFPGAN?

To use SadTalker together with GFPGAN, follow these steps:

Download SadTalker:

Clone the SadTalker repository from GitHub:

git clone https://github.com/Winfredy/SadTalker.git

Download the necessary checkpoints and GFPGAN models from the downloads section of the SadTalker repository.
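After downloading, it can help to confirm that the model files landed where the code expects them. The sketch below assumes the weights live in `checkpoints/` and `gfpgan/weights/` inside the cloned repository. Those folder names are an assumption about the repository layout, so adjust them if your copy differs. The function name `missing_model_dirs` is hypothetical.

```python
from pathlib import Path
from typing import List

# Assumed locations of the downloaded weights, relative to the repository root.
EXPECTED_MODEL_DIRS = ("checkpoints", "gfpgan/weights")


def missing_model_dirs(repo_root: str) -> List[str]:
    """Return the expected model folders that are absent or contain no files."""
    root = Path(repo_root)
    missing = []
    for rel in EXPECTED_MODEL_DIRS:
        folder = root / rel
        # A folder counts as populated only if it holds at least one regular file.
        has_files = folder.is_dir() and any(p.is_file() for p in folder.iterdir())
        if not has_files:
            missing.append(rel)
    return missing
```

Calling `missing_model_dirs("SadTalker")` from the directory where you ran `git clone` returns an empty list when both folders contain files, and otherwise names the folders that still need attention.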

Install Dependencies:

Navigate to the SadTalker directory and install the required dependencies. Typically, this can be done using pip:

pip install -r requirements.txt

Run SadTalker:

Execute start.bat from Windows Explorer (make sure to run it as a normal, non-administrator user).

This will launch a Gradio-powered WebUI demo where you can interact with the system to create talking avatars.

Configure Parameters:

In the WebUI, you can set the face resolution for the generated avatars. Options typically include 256×256 or 512×512 resolution. Higher resolutions (512×512) provide better quality but require more computational resources.

Select GFPGAN as the face enhancer to improve the final quality of the animated face.
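The same two settings can also be supplied without the WebUI. The Python sketch below builds a command line for the repository's `inference.py` script. It assumes the flags `--source_image`, `--driven_audio`, `--size`, and `--enhancer` as I understand them from the repository, so check `python inference.py --help` before relying on them. The file names and the helper names are hypothetical.

```python
import subprocess
import sys
from typing import List


def build_inference_command(
    source_image: str,
    driven_audio: str,
    size: int = 256,
    use_gfpgan: bool = True,
) -> List[str]:
    """Build the argument list for SadTalker's inference.py (flags are assumed)."""
    if size not in (256, 512):
        raise ValueError("size is expected to be 256 or 512")
    cmd = [
        sys.executable, "inference.py",
        "--source_image", source_image,
        "--driven_audio", driven_audio,
        "--size", str(size),           # face resolution: 256 or 512
    ]
    if use_gfpgan:
        cmd += ["--enhancer", "gfpgan"]  # select GFPGAN as the face enhancer
    return cmd


def run_inference(cmd: List[str], repo_dir: str = "SadTalker") -> None:
    """Run the command from inside the cloned repository; raises on failure."""
    subprocess.run(cmd, cwd=repo_dir, check=True)
```

For example, `run_inference(build_inference_command("face.png", "speech.wav", size=512))` would request a 512×512 face with GFPGAN enhancement, provided the checkpoints are in place.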

Additional Tips

Documentation and Support:

If you encounter issues or need further guidance, refer to the official SadTalker documentation available on GitHub. The repository includes detailed instructions, troubleshooting tips, and examples.

Pre-trained Models:

Explore the repository to find pre-trained models and checkpoints that can enhance the performance and quality of the generated animations.

Hardware Requirements:

Ensure you have a compatible GPU to handle the computational demands of generating high-resolution talking faces.
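A quick way to see what hardware is available is to ask PyTorch directly. This is a minimal sketch that assumes a CUDA-based PyTorch installation. The function name `describe_gpu` is hypothetical, and the check says nothing about how much memory a given resolution actually needs.

```python
import importlib.util


def describe_gpu() -> str:
    """Return a short description of the first CUDA device, if one is usable."""
    # Avoid an ImportError when PyTorch has not been installed yet.
    if importlib.util.find_spec("torch") is None:
        return "PyTorch is not installed"

    import torch

    if not torch.cuda.is_available():
        return "No CUDA GPU detected"

    props = torch.cuda.get_device_properties(0)
    memory_gib = props.total_memory / 1024**3
    return f"{props.name} with {memory_gib:.1f} GiB of memory"


print(describe_gpu())
```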

Applications and Future Directions

SadTalker, combined with GFPGAN, opens up numerous exciting applications:

Entertainment and Media:

Create engaging and interactive talking avatars for movies, video games, and virtual reality experiences.

Social Media and Content Creation:

Enable influencers and content creators to produce dynamic and lifelike animated videos from static images.

Virtual Assistants and Chatbots:

Develop more human-like virtual assistants and chatbots that can express emotions and engage users more effectively.

Historical Preservation:

Animate historical photos to bring historical figures to life, providing a more immersive and educational experience.

As the technology behind SadTalker and GFPGAN continues to evolve, we can expect even more realistic and versatile applications.

Conclusion

GFPGAN is an important component of the SadTalker workflow. As the face enhancer, it improves the quality and realism of the generated avatars and of the final animated video.